Here was an exciting opportunity to work with the technical and research teams of a leading university in motion and facial capture. They deployed cutting-edge technology combined with their own proprietary software, designed to speed up the creative and execution process of complex visualisations and output; truly amazing. Here’s a simplified version of the process.

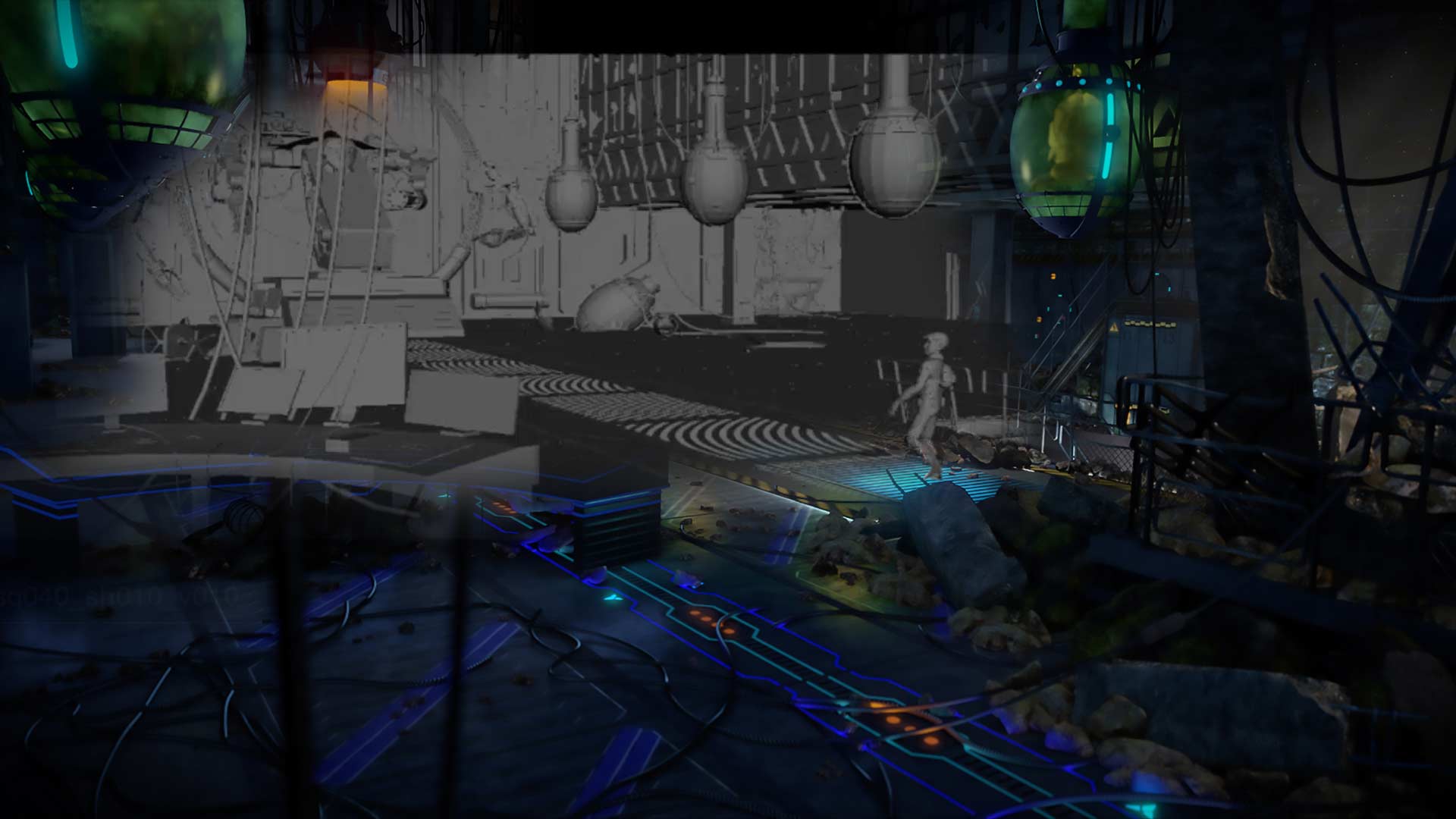

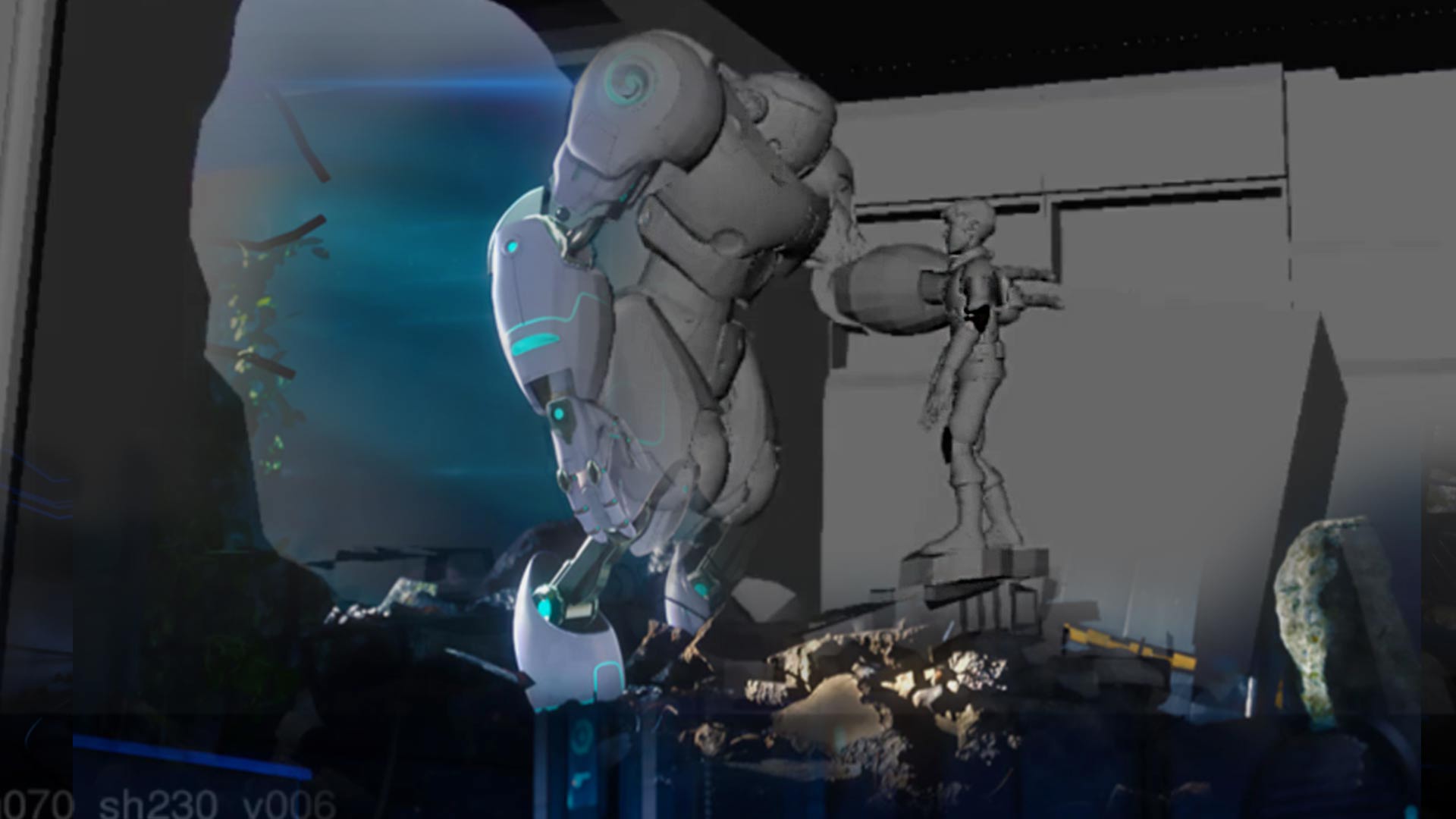

We modelled 3D characters and environments, together with artistic 2D renderings of alternative environments and objects. These were imported into the software and then lit to match the lighting conditions of the environments they would eventually be placed in.

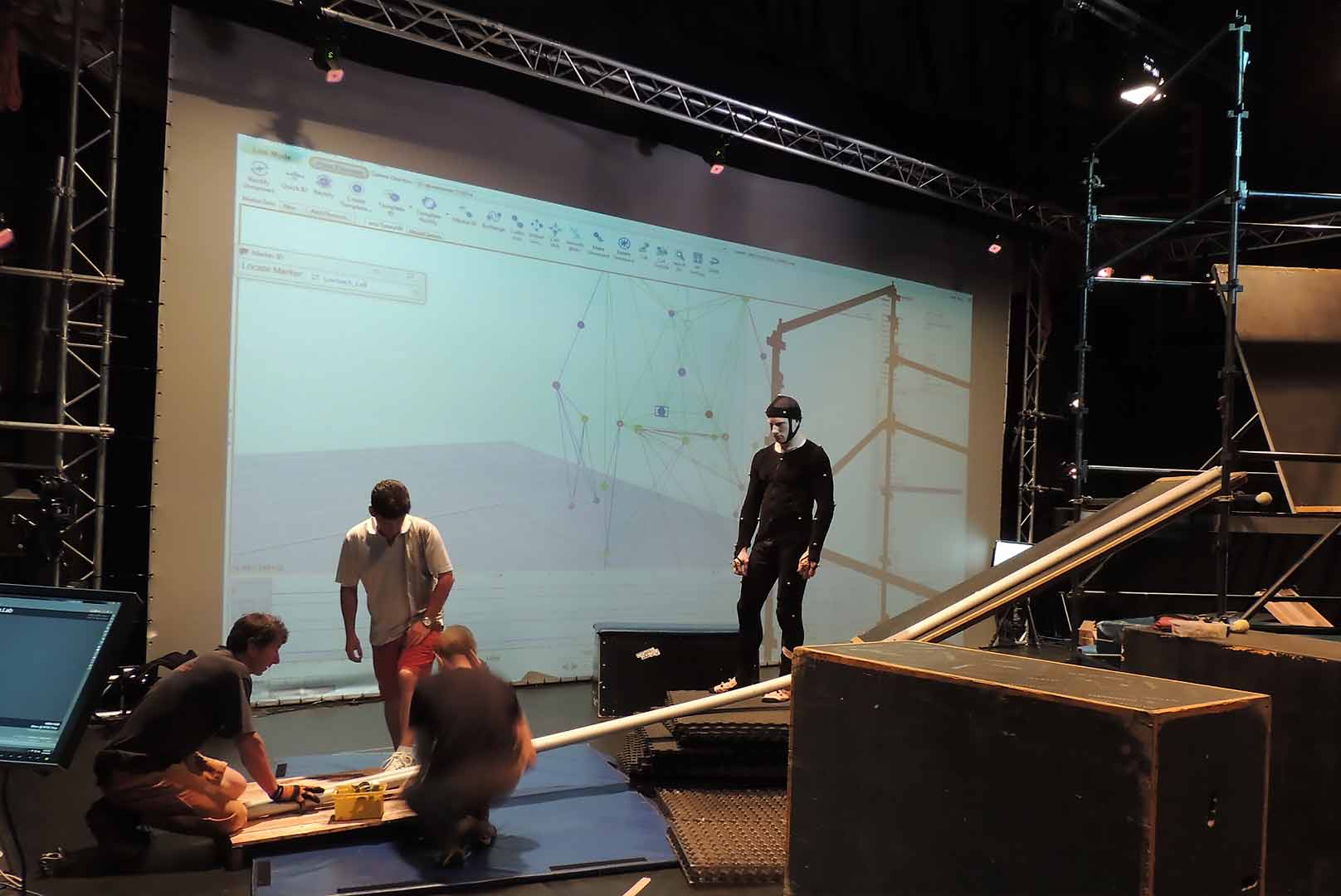

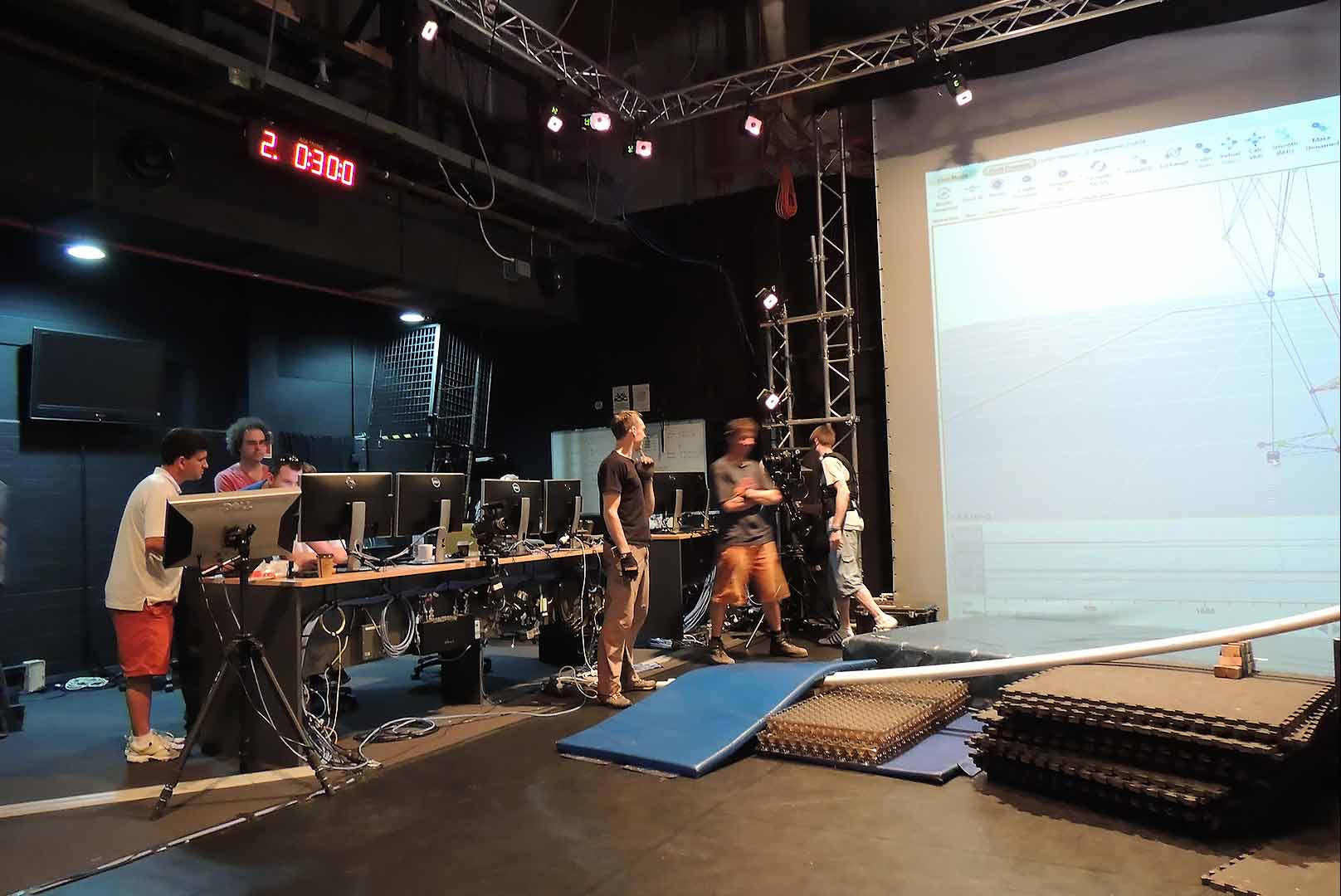

Performance actors in markered suits then enact and interact with each other in what is called a Volume: a staging area bounded by multiple cameras (up to 36 or more), aligned to match the internal perimeter of the volume. Infrared lasers from each camera mark the actors’ positions and motion in the volume and capture the performance data off each marker as the actors go through the rigours of performance for every action sequence. The data output is then ‘Resolved’ and given to 3D animators to manipulate and apply to the respective characters in the production.

Virtual cameras, hand-held devices with screens (similar to iPads), enable a director to guide the DOP (director of photography or camera operator) and performers, and to replicate exactly what would be seen in the final production. They could do camera tracking, pan, zoom, f-stop depth-of-field, focus, crane and circular dolly with perspective, and the data output from the camera could be programmed so that the entire hand-held shot, settings and movement included, could be repeated as needed for additional ‘retakes’.
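For the technically curious, here is a rough idea of how a recorded hand-held shot might be stored and played back. This is only a minimal sketch in Python, not the team's actual software: the `CameraSample` fields, the `sample_at` function and the use of simple linear interpolation between samples are all my own assumptions for illustration.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class CameraSample:
    """One recorded moment of a virtual camera take (hypothetical layout)."""
    t: float                               # seconds since the take started
    position: tuple[float, float, float]   # camera position in the volume
    pan_deg: float                         # pan angle in degrees
    focal_length_mm: float                 # zoom setting
    f_stop: float                          # aperture setting


def lerp(a: float, b: float, u: float) -> float:
    """Linear interpolation between a and b for u in [0, 1]."""
    return a + (b - a) * u


def sample_at(take: list[CameraSample], t: float) -> CameraSample:
    """Return the camera state at time t by interpolating the recorded take.

    Assumes samples are sorted by strictly increasing time. Pan is
    interpolated linearly, so wrap-around at 360 degrees is not handled.
    """
    if not take:
        raise ValueError("take is empty")
    times = [s.t for s in take]
    if t <= times[0]:
        return take[0]
    if t >= times[-1]:
        return take[-1]
    i = bisect_right(times, t)   # index of the first sample later than t
    a, b = take[i - 1], take[i]
    u = (t - a.t) / (b.t - a.t)
    return CameraSample(
        t=t,
        position=tuple(lerp(p, q, u) for p, q in zip(a.position, b.position)),
        pan_deg=lerp(a.pan_deg, b.pan_deg, u),
        focal_length_mm=lerp(a.focal_length_mm, b.focal_length_mm, u),
        f_stop=lerp(a.f_stop, b.f_stop, u),
    )


# A two-sample take: the camera moves 2 units along x while zooming in.
take = [
    CameraSample(0.0, (0.0, 0.0, 0.0), 0.0, 35.0, 2.8),
    CameraSample(1.0, (2.0, 0.0, 0.0), 10.0, 50.0, 5.6),
]
halfway = sample_at(take, 0.5)
```

Stepping `t` forward frame by frame and feeding each result to the renderer would, under these assumptions, reproduce the same move and settings on every retake.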

Now here’s the technically exciting part: this clever team had written software to enable the aperture to be programmed for each shot. The same went for the ambient lighting, key lighting, tracking and direction, temperature, colour and intensity and, with the use of 3D glasses, the stereoscopic offset of the characters, to optimise the resulting stereography (if required). This ensures the audience doesn’t throw up due to excessive parallax, or poor convergence of the two offset images at the perceived distance in front of or behind the screen.
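To put a number on that parallax, here is a small sketch in Python using the standard similar-triangles geometry: for a viewer whose eyes are `eye_sep` apart, sitting `screen_dist` from the screen, a point meant to appear at `point_dist` from the viewer needs its left and right images separated on screen by `eye_sep * (point_dist - screen_dist) / point_dist`. The function names and the comfort threshold (2% of screen width) are my own assumptions for illustration, not values from the team's software.

```python
def screen_parallax(eye_sep: float, screen_dist: float, point_dist: float) -> float:
    """Horizontal offset between left and right images on the screen.

    All distances share one unit (e.g. metres). The result is positive for
    points perceived behind the screen, negative for points in front of it,
    and zero for points on the screen plane.
    """
    if point_dist <= 0:
        raise ValueError("point_dist must be positive")
    return eye_sep * (point_dist - screen_dist) / point_dist


def is_comfortable(parallax: float, screen_width: float,
                   max_fraction: float = 0.02) -> bool:
    """Check parallax against an assumed limit (a fraction of screen width)."""
    return abs(parallax) <= max_fraction * screen_width


# Viewer 10 m from the screen, eyes 6.5 cm apart.
behind = screen_parallax(0.065, 10.0, 20.0)    # character 20 m away
in_front = screen_parallax(0.065, 10.0, 5.0)   # character 5 m away
```

Note that the offset never exceeds the eye separation for points behind the screen, but grows without bound as a character comes closer to the viewer, which is where the queasiness tends to start.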

I know this may all sound like gobbledygook to a lay-person, but here’s the rub. These performances give directors exactly what they want in physical and facial performance: motion, fluidity and action in one fell swoop. A monumental saving in time. And an extraordinary reduction in production costs along the pipeline in film animation, or even in training productions with risky performances that might seriously jeopardise the safety and well-being of actors. Think: falling from skyscrapers, being crushed by falling debris, traversing mighty canyons, all in the safety of a large room!

Here’s a great example: we had characters, sets and objects uploaded from Korea, with a non-English-speaking director giving his direction via a visual link and an interpreter. Performances were enacted live as the actors interacted in an illustrated cartoon environment, confronting animals, interacting with other characters, walking in and around objects, street lamps and trees, and over rocks and other obstacles, with the entire performance output as a completed animation segment. Imagine being able to produce an animated cartoon series (or anything else for that matter) in REAL TIME. This would become the new means to produce content for any production, carving slabs off the cost while delivering much higher volumes of material for the growing media channels, broadcast and online. Crikey, this was the state of the art!

But a storm was brewing in the mighty animation houses of the east, where labour is cheap and extensive. It was not a happy day. This technology, with its potential displacement of labour and temporary or permanent staff layoffs in many affected areas, might never see the light of day for mainstream applications. With so much production going off-shore to keep growing costs down these days, the push-back was that long-serving displaced staff might be snapped up by aggressive competitors. Consequently, businesses using such technology might be unable to recruit those staff again in future, and thus become totally disadvantaged in the process. Whoa, now we have winners and losers on both sides.

So, you can see that the state-of-the-art has been usurped by the-art-of-the-State. In other words, the State loses its economic skilled-labour advantage, and we lose the potential to do great things; a fundamental technical and economic mishap! So what’s new, you say?

Perhaps it’s time to revisit Nikola Tesla’s anti-gravity Space Drive, Micro Helices or something simple like that. Obviously the oil cartels won’t mind. We’re only displacing sand . . . and shale and . . .